Smart pointers have become the preferred choice for memory management in C++. This is anything but odd when you come to think of it.

They are lightweight, memory management is as tight as it could be, and they are very simple to use. The pitfalls exist, but they are pretty obvious (for example: no dark magic on the internal pointer...).

And it's transparent! Look, I type "->" and I really access the object behind my pointer!

Last but not least, since TR1, smart pointers have been available as part of the standard library, making their usage safe and standard compliant.

That being said, I think they are overused.

Taxonomy

Smart pointers can be divided into two broad categories, based on their scope of action: local or global.

Scoped smart pointers

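A scoped pointer releases the attached resource when the current scope is exited. This may be a function body, a class, or a loop. A scoped pointer is not meant to carry the resource outside its scope: boost::scoped_ptr cannot be copied at all, and copying a std::auto_ptr transfers ownership to the copy, leaving the original null. Using the resource after its scope has been exited (for example through a raw pointer obtained from it) results in undefined behaviour.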

Examples:

- std::auto_ptr (with limitations)

- boost::scoped_ptr

These smart pointers are straightforward and are typically used to manage resources you don't wish to share outside a given scope. They were designed to make RAII easier and more uniform.

Shared smart pointers

The attached resource is released when there are no more owners. This is done, most of the time, with reference counting: the smart pointer embodies a reference counter that is incremented when ownership is taken and decremented when ownership is released. Thus shared pointers can be copied and used outside the scope in which they were created.

Examples:

- std::shared_ptr

- boost::shared_ptr

Taking ownership means "getting a copy of the smart pointer object". Whether this happens through the copy constructor or the assignment operator makes no difference.

Shared smart pointers are a great way to avoid memory leaks as the resource will be freed as soon as possible.

Should you wish to know more about smart pointers and memory management, I invite you to read this previous post.

Why are they overused?

First and foremost, because they are frequently unneeded. In C++ it's easy to avoid dynamic allocation; do so whenever you can.

Does that mean you must use a smart pointer whenever you really need a dynamically allocated object? The answer is no, for at least two reasons: interface coupling and performance.

Smart pointers increase coupling

Your interfaces shouldn't take or return smart pointers. You don't want to expose your memory management strategy to the outside, so that clients cannot make assumptions about it. Clients making assumptions are the seed of maintenance hell.

You want the smallest possible contact surface between two interfaces. Using smart pointers increases that surface; therefore you should refrain from using them.

Thus, that kind of interface is to be avoided:

```cpp
#include <memory>

struct obj;

class interface
{
public:
    void apply_thing(std::shared_ptr<obj> o);
    std::shared_ptr<obj> do_thing(std::shared_ptr<obj> o);
};
```

Instead, prefer:

```cpp
struct obj;

class interface
{
public:
    void apply_thing(obj &o);
    obj &do_thing(obj &o);
};
```

What's the difference? In the first case you impose a memory management model on your client; in the second you don't. In the first case you impose a dependency on shared_ptr; in the second you don't.

If the underlying objects are dynamically allocated and you cannot pass along references, you might want to consider:

```cpp
struct obj;

class interface
{
public:
    void apply_thing(obj *o);
    obj *do_thing(obj *o);
};
```

That looks dangerous, but it isn't, as long as you clearly document how resources should be released. That way, your client may encapsulate (or not) the provided pointers in whatever way is most convenient for the application at hand. You can even provide a suggested encapsulation to your client.

If you force the usage of shared pointers (for example), your client cannot directly wrap the object into a scoped pointer. Or maybe your client has got its own smart pointer class and wants to use it. Or maybe your client prefers to work on raw pointers for efficiency reasons. I'm pretty sure you can come up with reasons of your own.

Wait a minute... Did someone say efficiency reasons?

Smart pointers hurt performances

Surprised? How could increasing and decreasing an integer affect performance on a multi-core, multi-gigahertz computer? Am I obsessed with details? Have I done too much assembly in my life to think straight?

- Concurrency issues: even with atomic implementations, you'll get a certain degree of contention. The problem is that you're creating a barrier, preventing the optimal reordering of operations. Of course operations shouldn't be reordered! You don't want your pointer to be modified or copied before the reference counter is properly updated! Unfortunately this translates into a performance hit.

- Size: a pointer is one word large, and on a 64-bit setup a word is 8 bytes. A shared smart pointer typically stores two pointers (one to the object, one to the control block holding the counters), so it takes 16 bytes on a 64-bit setup, plus the separately allocated control block. That's more than a twofold increase.

- Locality: the reference counter may be located in a different cache line, or even a completely different page, than the pointed-to resource.

- Complexity: copying a raw pointer is a simple move; copying a smart pointer becomes a decrement/verify/increment/move operation, not to mention potential spinning or even locking. Moving a pointer is one instruction. Moving a smart pointer can easily compile into a dozen instructions, including at least one conditional branch that pollutes the branch predictor of your beloved processor.

Sounds like a terrible picture, doesn't it? Fortunately, it's not that bad. The performance penalty really starts to show during the pointer migration season (that's around Fall), when all the pointers fly to the south of memory, where it's warmer. The rest of the year it's pretty OK...

Just keep that list in mind for a while and use it as a reference for the rest of the post.

Why are they useless?

Most of the time you are keeping a count you care little about. Who cares how many owners there are if this information isn't needed to decide when a resource should be released?

A typical example:

- Creation of an object at program startup, encapsulation in a smart pointer

- The encapsulated object is passed along several functions and copied around in various collections

- Each of the above operations requires an atomic and ordered increment or decrement. Every time the counter is decremented, it is compared against zero to check whether the object must be destroyed. Since the smart pointer is larger than a word, copying it is also much slower.

- Before the program exits, the object needs to be destroyed. It will be done automatically when the reference count reaches zero, that is when the scope of the main() function is left. No risk of error.

Now consider:

- Creation of an object at program startup, no encapsulation.

- The object's pointer is passed along several functions and copied around in various collections.

- Each of the above operations only requires moving one word.

- Before the program exits, the object needs to be destroyed. A call to delete is performed. Small risk of error.

What happens here is that we have used a shared pointer when a scoped pointer was enough. That mistake is made more often than not, and it's a real waste of cycles. Think green!

I love the smell of benchmarks in the morning

We've done enough thinking for today, let's measure!

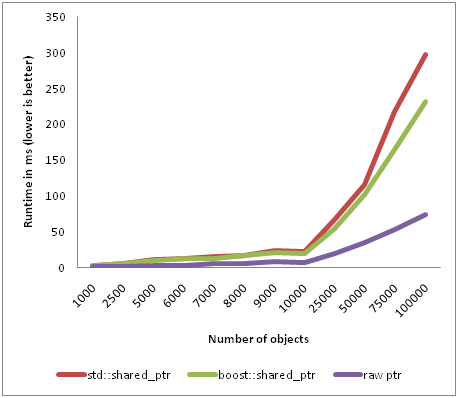

I wrote a test program that spends most of its time playing with smart pointers. It's not intended as a real-world benchmark of the impact of smart pointers on performance; it's here to prove that they are not so harmless.

The benchmark was run on the infamous 7/7 computer, with 8 threads, and compiled with Visual Studio 2010 Professional Beta 1. You can get the source code here (yes I know, it doesn't do anything sensible with the data).

The results speak for themselves:

Two conclusions:

- boost::shared_ptr is doing better than std::shared_ptr

- raw pointers obliterate smart pointers when the number of objects increases

Why such a difference? Remember our little list: with raw pointers you're not doing anything when passing a pointer along. Going faster is just a question of doing less!

I'll let you imagine the overhead of a program where "everything is smart pointed". Not only are you going to strain the memory allocator, but you're also wasting time updating counters when you don't actually care how many owners an object has!

Some sort of conclusion

Most of the techniques you know have their limitations and, as an engineer, your work is to make sure you use the best tool for the job. Don't lazily use the same technique over and over because "it works most of the time".

Smart pointers helped C++ developers to crush very nasty memory management problems, especially the typical "I have an object that moves around my program and it would be nice to free it when no one uses it anymore".

The thing is, the more objects you have, the more expensive smart pointers become. Keep that in mind and you might be able to improve the performance of your program even further!